Containers Aren't Linux Processes

There are many ways to create containers, especially on Linux and alike. Besides the super widespread Docker implementation, you may have heard about LXC, systemd-nspawn, or maybe even OpenVZ.

The general concept of the container is quite vague. What's true and what's not often depends on the context, but the context itself isn't always given explicitly. For instance, there is a common saying that containers are Linux processes or that containers aren't Virtual Machines. However, the first statement is just an oversimplified attempt to explain Linux containers. And the second statement simply isn't always true.

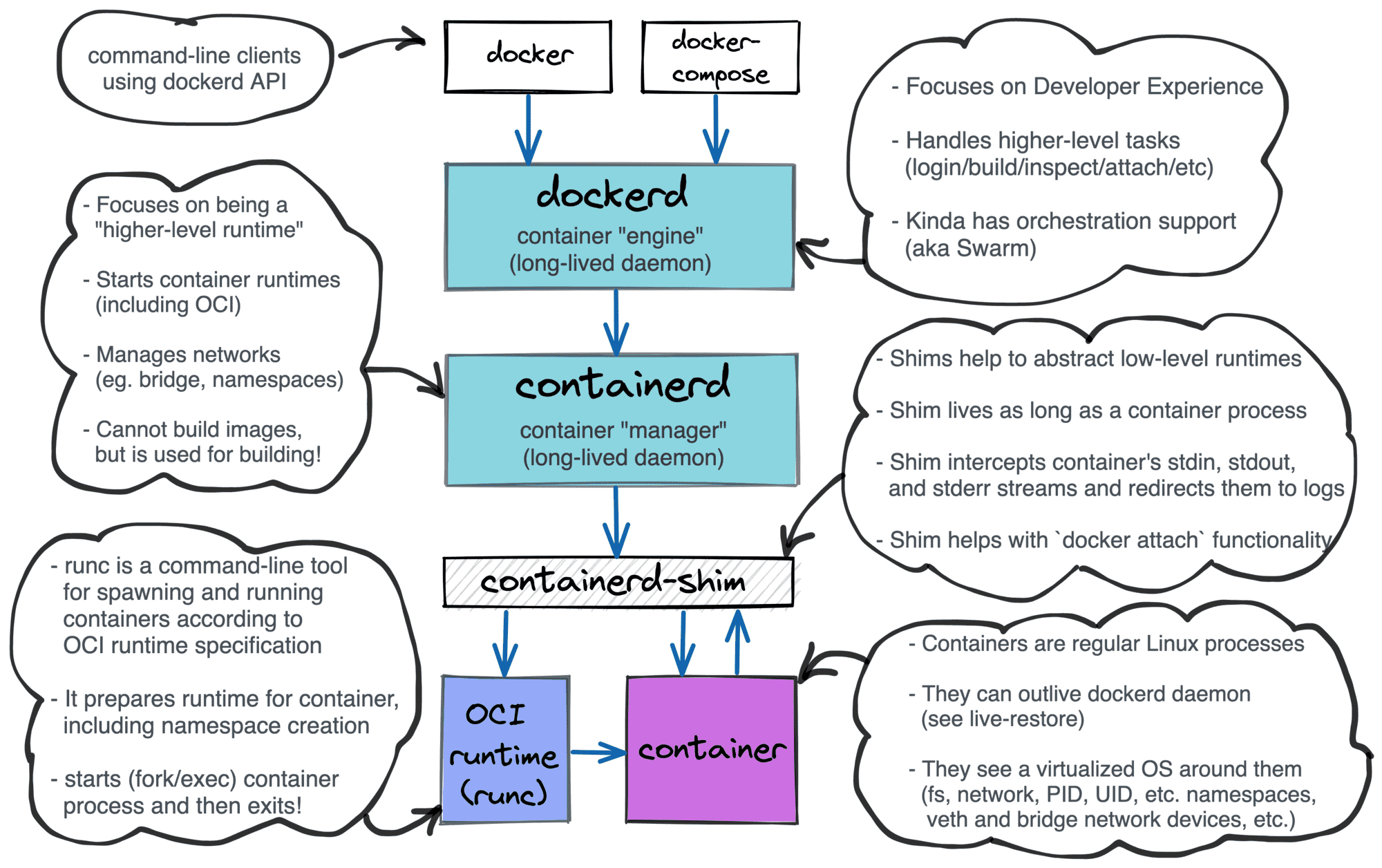

In this article, I'm not trying to review all possible ways of creating containers. Instead, the article is an analysis of the OCI Runtime Specification. The spec turned out to be an insightful read! For instance, it gives a definition of the standard container (and no, it's not a process) and sheds some light on when Virtual Machines can be considered containers.