Multiple Containers, Same Port, no Reverse Proxy...

Even when you have just one physical or virtual server, it's often a good idea to run multiple instances of your application on it. Luckily, when the application is containerized, doing so is relatively simple. With multiple application containers, you get horizontal scaling and much-needed redundancy at very little cost. If there is a sudden need to handle more requests, you can adjust the number of containers accordingly. And if one of the containers dies, the others are there to take over its share of the traffic, so a single container is no longer a single point of failure (SPOF) for your app.

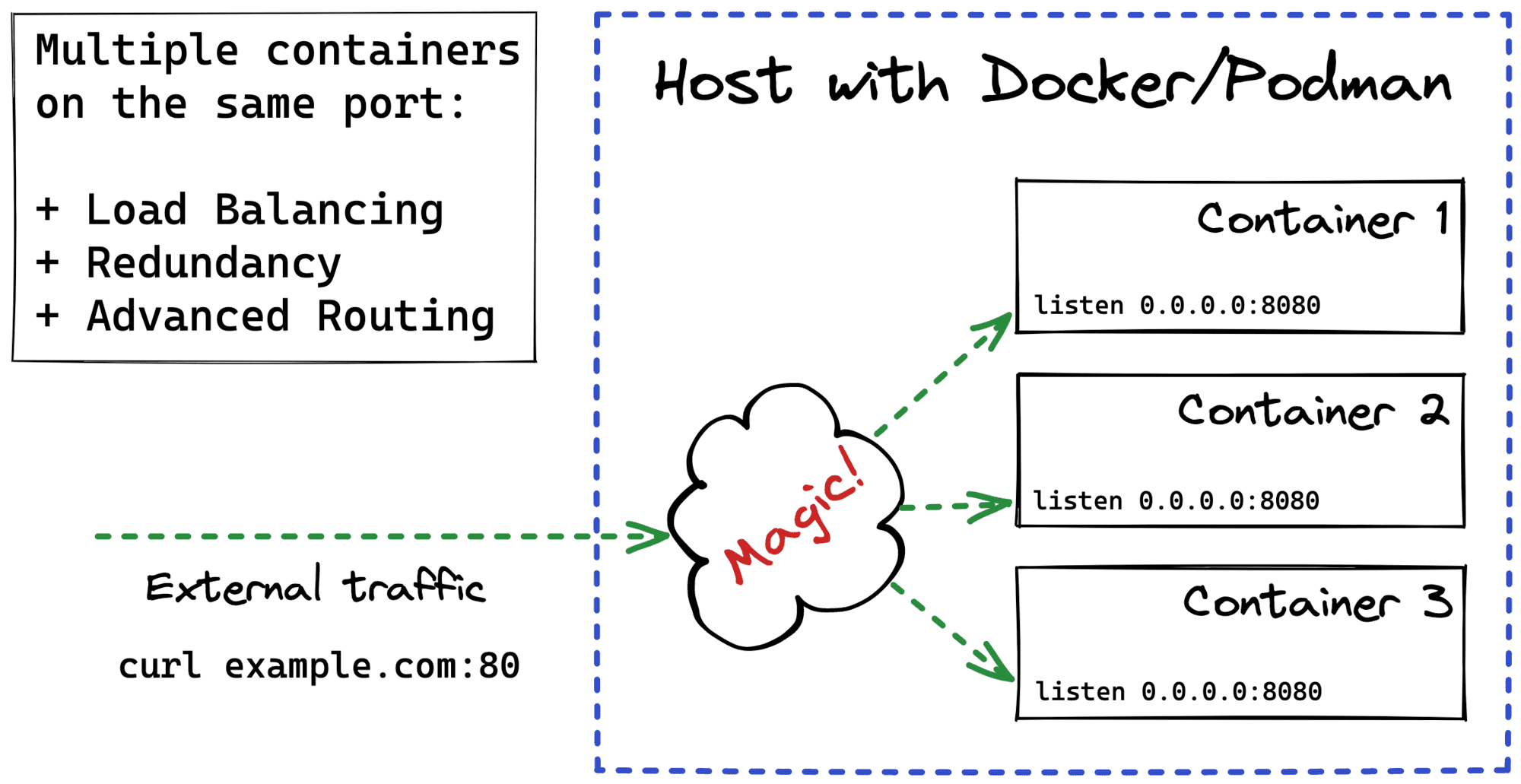

The tricky part here is how to expose such a multi-container application to the clients. Multiple containers mean multiple listening sockets. But most of the time, clients just want to have a single point of entry.
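To make the "multiple listening sockets" point concrete, here is a minimal Python sketch of the simplest form of the problem. By default, two listeners on one host cannot claim the same address and port. The helper names `try_listen` and `second_bind_error` are made up for this illustration, and the sketch assumes plain TCP sockets on the loopback interface with default socket options. Containers usually get their own network namespace and IP address, so each of them can listen on the same port number, but the client is then facing several addresses instead of one.

```python
import errno
import socket
from typing import Optional


def try_listen(port: int) -> socket.socket:
    """Open a TCP listening socket on 127.0.0.1:<port> (hypothetical helper)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        sock.bind(("127.0.0.1", port))
        sock.listen()
    except OSError:
        sock.close()
        raise
    return sock


def second_bind_error() -> Optional[int]:
    """Return the errno raised when a second listener claims a busy port."""
    first = try_listen(0)  # port 0 asks the OS to pick any free port
    port = first.getsockname()[1]
    try:
        second = try_listen(port)
    except OSError as exc:
        return exc.errno
    else:
        # Not expected with default socket options.
        second.close()
        return None
    finally:
        first.close()


if __name__ == "__main__":
    err = second_bind_error()
    print("second bind failed with EADDRINUSE:", err == errno.EADDRINUSE)
```

So either every instance gets its own address (and the client has to know all of them), or something has to make several sockets look like one entry point.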