GoogleContainerTools' distroless base images are often mentioned as one of the ways to produce small(er), fast(er), and secure(r) containers. But what are these distroless images, really? Why are they needed? What's the difference between a container built from a distroless base and a container built from scratch? Let's take a deeper look.


Table of Contents

- Pitfalls of scratch containers

- Meet the first distroless image - distroless/static

- Not every program is statically linked

- Meet the second distroless image - distroless/base

- Not every dynamically linked use case is the same

- Meet the third distroless image - distroless/cc

- Base images for interpreted or VM-based languages

- Who uses distroless base images

- Pros, cons, and alternatives of distroless images

- Conclusion

- Resources

Pitfalls of scratch containers

A while ago, I was debunking a (mine only?) misconception that every container has an operating system inside. Using an empty (aka scratch) base image, I created a container holding just a single file - a tiny hello-world program. And, to my utter surprise, when I ran it, it worked! Among other things, it allowed me to conclude that having a full-blown Linux distro in a container is not mandatory.

But, as it often happens in labs, that experiment was staged 🙈

To make my point stronger, I deliberately oversimplified the test executable by creating it statically linked and doing nothing but printing a bunch of ASCII characters. So, what if I try to repeat that experiment today but use slightly more involved apps? Will it reveal any non-obvious problems with scratch containers?

Preparing the new stage

The article is going to be a hands-on one (learn by doing == ❤️) with the only prerequisite of having a machine with Docker on it. To make the examples reproducible, I'll write them as multi-stage Dockerfiles (shamelessly abusing the heredoc feature). However, to avoid bloating the article, I'll keep most of the Dockerfiles collapsed by default and highlight only the important parts instead.

Here is the general idea:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

...

)

func main() {

<...test program goes here...>

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY --from=builder /app/main /

CMD ["/main"]

Pitfall 1: Scratch containers miss proper user management

The very first thing I'll try to put into a "from scratch" container is the following Go snippet printing out some information about the current user:

user, err := user.Current()

if err != nil {

panic(err)

}

fmt.Println("UID:", user.Uid)

fmt.Println("GID:", user.Gid)

fmt.Println("Username:", user.Username)

fmt.Println("Name:", user.Name)

fmt.Println("HomeDir:", user.HomeDir)

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"os/user"

)

func main() {

user, err := user.Current()

if err != nil {

panic(err)

}

fmt.Println("UID:", user.Uid)

fmt.Println("GID:", user.Gid)

fmt.Println("Username:", user.Username)

fmt.Println("Name:", user.Name)

fmt.Println("HomeDir:", user.HomeDir)

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t scratch-current-user .

Let's try to run it and see if it works:

$ docker run --rm scratch-current-user

panic: user: Current requires cgo or $USER set in environment

goroutine 1 [running]:

main.main()

/app/main.go:11 +0x23c

That's a pity! A failure from the first try. Can it be fixed, though?

cgo is out of scope here (I intentionally disabled it to avoid the dependency on libc or any other shared libraries), so according to the Go stdlib, the only remaining way to fix the problem is by setting the $USER environment variable:

$ docker run --rm -e USER=root scratch-current-user

UID: 0

GID: 0

Username: root

Name:

HomeDir: /

Seems to work! But containers shouldn't run as root. Can another user be used?

$ docker run --rm -e USER=nonroot scratch-current-user

UID: 0

GID: 0

Username: nonroot

Name:

HomeDir: /

Ah, shoot! The nonroot user also has the UID 0! In other words, it's the same root but in disguise. Maybe using the --user flag will help?

$ docker run --user nonroot --rm scratch-current-user

docker: Error response from daemon:

unable to find user nonroot:

no matching entries in passwd file.

Nope. But Docker gave me a good pointer here - is the passwd file even there?! So, this was my first realization:

The /etc/passwd and /etc/group files are missing in "from scratch" containers.

Placing these two files manually into the end image seems to resolve the issue:

FROM scratch

COPY <<EOF /etc/group

root:x:0:

nonroot:x:65532:

EOF

COPY <<EOF /etc/passwd

root:x:0:0:root:/root:/sbin/nologin

nonroot:x:65532:65532:nonroot:/home/nonroot:/sbin/nologin

EOF

COPY --from=builder /app/main /

CMD ["/main"]

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"os/user"

)

func main() {

user, err := user.Current()

if err != nil {

panic(err)

}

fmt.Println("UID:", user.Uid)

fmt.Println("GID:", user.Gid)

fmt.Println("Username:", user.Username)

fmt.Println("Name:", user.Name)

fmt.Println("HomeDir:", user.HomeDir)

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY <<EOF /etc/group

root:x:0:

nonroot:x:65532:

EOF

COPY <<EOF /etc/passwd

root:x:0:0:root:/root:/sbin/nologin

nonroot:x:65532:65532:nonroot:/home/nonroot:/sbin/nologin

EOF

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t scratch-current-user-fixed .

$ docker run --user root --rm scratch-current-user-fixed

UID: 0

GID: 0

Username: root

Name: root

HomeDir: /root

$ docker run --user nonroot --rm scratch-current-user-fixed

UID: 65532

GID: 65532

Username: nonroot

Name: nonroot

HomeDir: /home/nonroot

Finally, the example works as expected. But manual user management is not fun 🙃
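To make the role of /etc/passwd more tangible, here is a minimal sketch of the kind of lookup that needs this file: mapping a user name to its UID, GID, and home directory. The `passwdEntry` type, the `findByName` helper, and the `samplePasswd` constant are names made up for this illustration (they are not part of Docker or of the Go standard library), and the parsing is deliberately simplified.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
)

// passwdEntry holds the /etc/passwd fields printed by the example above.
// Hypothetical type, used only for this illustration.
type passwdEntry struct {
	Username string
	UID      string
	GID      string
	Name     string
	HomeDir  string
}

// findByName scans passwd-formatted text (name:password:UID:GID:GECOS:home:shell)
// and returns the entry for the given user name, if there is one.
func findByName(passwd, name string) (passwdEntry, bool) {
	sc := bufio.NewScanner(strings.NewReader(passwd))
	for sc.Scan() {
		line := strings.TrimSpace(sc.Text())
		if line == "" || strings.HasPrefix(line, "#") {
			continue
		}
		f := strings.Split(line, ":")
		if len(f) < 7 || f[0] != name {
			continue
		}
		return passwdEntry{Username: f[0], UID: f[2], GID: f[3], Name: f[4], HomeDir: f[5]}, true
	}
	return passwdEntry{}, false
}

// samplePasswd mirrors the two entries copied into the fixed image.
const samplePasswd = `root:x:0:0:root:/root:/sbin/nologin
nonroot:x:65532:65532:nonroot:/home/nonroot:/sbin/nologin
`

func main() {
	if e, ok := findByName(samplePasswd, "nonroot"); ok {
		fmt.Println("UID:", e.UID, "GID:", e.GID, "HomeDir:", e.HomeDir)
	}
	// An empty file (or no file at all) means the name cannot be resolved.
	if _, ok := findByName("", "nonroot"); !ok {
		fmt.Println("no matching entries in passwd file")
	}
}
```

With an empty input, the lookup has nothing to match against, which is essentially the situation in a "from scratch" container.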

Pitfall 2: Scratch containers miss important folders

Here is another example - it's pretty common for a program to create temporary files and folders:

f, err := os.CreateTemp("", "sample")

if err != nil {

panic(err)

}

fmt.Println("Temporary file:", f.Name())

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"os"

)

func main() {

f, err := os.CreateTemp("", "sample")

if err != nil {

panic(err)

}

fmt.Println("Temporary file:", f.Name())

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t scratch-tmp-file .

But apparently, creating a temporary file using the above Go snippet fails in a "from scratch" container:

$ docker run --rm scratch-tmp-file

panic: open /tmp/sample386939664: no such file or directory

goroutine 1 [running]:

main.main()

/app/main.go:11 +0xbc

The fix is simple - make sure the /tmp folder exists in the running container. There are different ways to achieve it, including mounting a folder on the fly, although doing it manually might get annoying (don't forget about the sticky bit - the directory mode needs to be set carefully 😉):

$ docker run --rm --mount 'type=tmpfs,dst=/tmp,tmpfs-mode=1777' scratch-tmp-file

Temporary file: /tmp/sample2333717960

And, of course, the other important locations like /home or /var might be missing too!

Pitfall 3: Scratch containers miss CA certificates

Another common use case that might not work as expected in "from scratch" containers is calling other services over HTTPS. Consider this simple snippet that fetches the front page of this blog:

resp, err := http.Get("https://iximiuz.com/")

if err != nil {

panic(err)

}

defer resp.Body.Close()

body, err := io.ReadAll(resp.Body)

if err != nil {

panic(err)

}

fmt.Println("Response", string(body))

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"io"

"net/http"

)

func main() {

resp, err := http.Get("https://iximiuz.com/")

if err != nil {

panic(err)

}

defer resp.Body.Close()

body, err := io.ReadAll(resp.Body)

if err != nil {

panic(err)

}

fmt.Println("Response", string(body))

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t scratch-https .

When run in a "from scratch" container, it produces the following error:

$ docker run --rm scratch-https

panic: Get "https://iximiuz.com/": x509: certificate signed by unknown authority

goroutine 1 [running]:

main.main()

/app/main.go:12 +0x144

The fix is, again, pretty straightforward - put the certificate authority (CA) certs at some predefined path in the target container. For instance, the up-to-date /etc/ssl/certs/ folder can be copied from the builder stage. But again, very few would be willing to do it manually!

Pitfall 4: Scratch images miss timezone info

What time is it in Amsterdam?

loc, err := time.LoadLocation("Europe/Amsterdam")

if err != nil {

panic(err)

}

fmt.Println("Now in Amsterdam:", time.Now().In(loc))

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"time"

)

func main() {

loc, err := time.LoadLocation("Europe/Amsterdam")

if err != nil {

panic(err)

}

fmt.Println("Now in Amsterdam:", time.Now().In(loc))

}

EOF

RUN CGO_ENABLED=0 go build main.go

# -=== Target image ===-

FROM scratch

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t scratch-tz .

Well, the above Go snippet won't tell you that if run in a "from scratch" container:

$ docker run --rm scratch-tz

panic: unknown time zone Europe/Amsterdam

goroutine 1 [running]:

main.main()

/app/main.go:11 +0x140

Similarly to the CA certificates, the timezone information is traditionally stored on disk (e.g., at /usr/share/zoneinfo) and then just looked up by programs at runtime. Since it cannot appear magically in the "from scratch" container, someone needs to put it in the image first (or mount it upon container startup).

Was it the last pitfall of using the scratch base image? I'm not sure. But it'd definitely be enough for me to start thinking of an alternative.

Meet the first distroless image - distroless/static

The intermediate summary of the "from scratch" container pitfalls that I discovered so far looks as follows:

- Scratch containers miss proper user management.

- Scratch containers miss important folders (/tmp, /home, /var).

- Scratch containers miss CA certificates.

- Scratch containers miss timezone information.

And I'm not even sure this list is exhaustive! So, while technically scratch base images remain a valid option to produce slim containers, in reality, using them for production workloads would likely impose significant operational overhead caused by the "incompleteness" of the resulting containers.

However, I still like the idea of putting only the necessary bits into my images. As the above experiments showed, it's not really complicated to come up with a base image that will have the needed files and the directory structure but at the same time won't be a full-blown Linux distro with a package manager and tens (or hundreds) of system libraries. It's just tedious.

And that's where the distroless images come to the rescue!

The idea behind the GoogleContainerTools/distroless project is pretty simple - make a bunch of minimal viable base images (keeping them as close to scratch as possible) and automate the creation procedure. But as always, the devil is in the details 🙈

A good starting point to become familiar with the project's offering is the distroless/static base image:

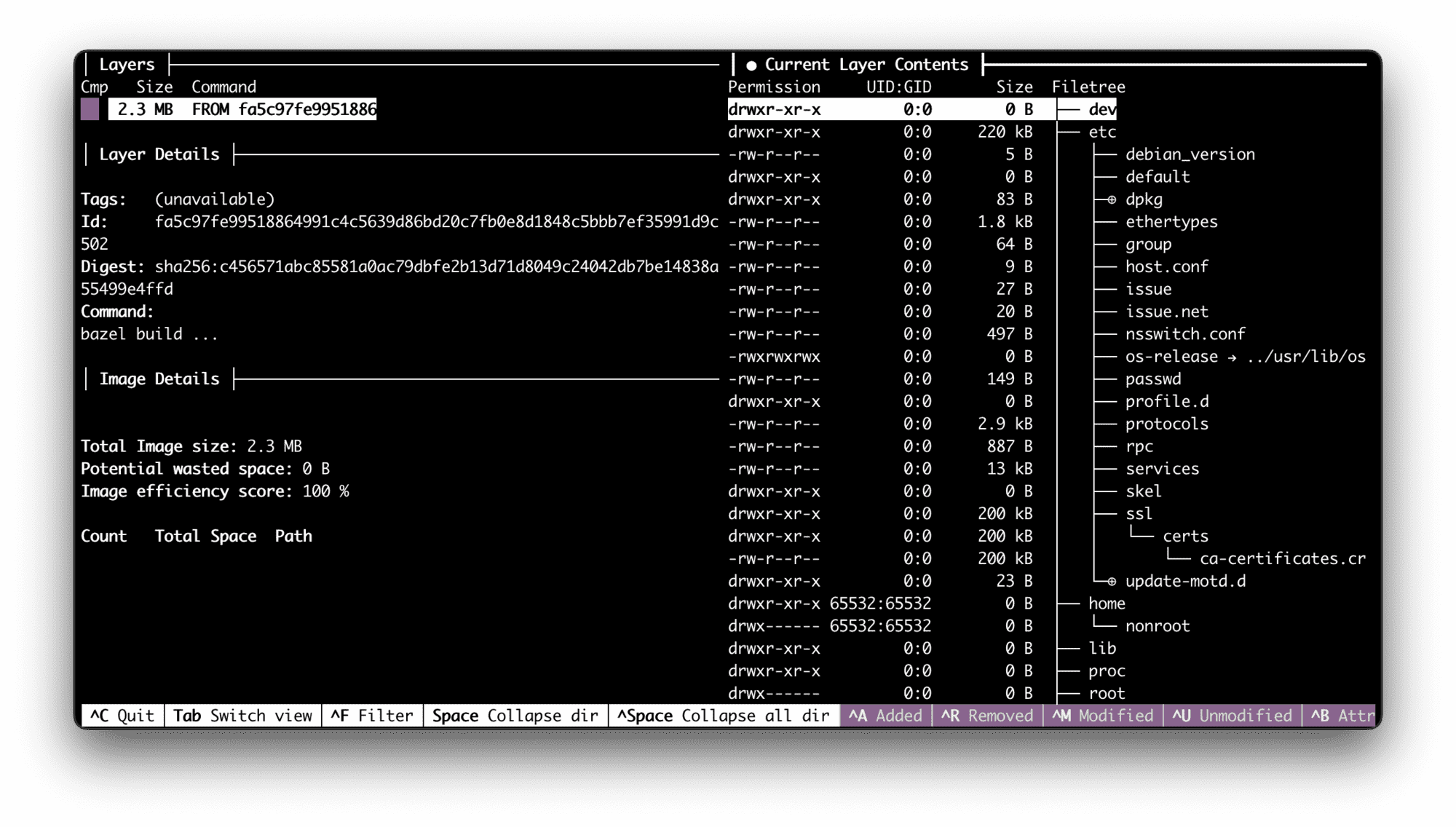

$ docker pull gcr.io/distroless/static

# Inspect it with github.com/wagoodman/dive

$ dive gcr.io/distroless/static

The dive output tells us that:

- The image is Debian-based (so, there is a distro in the distroless image after all, but it's stripped down to the bones).

- It's just ~2MB big and has a single layer (which is just great).

- There is a Linux distro-like directory structure inside.

- The /etc/passwd, /etc/group, and even /etc/nsswitch.conf files are present.

- Certificates and the timezone db seem to be in place as well.

- Last but not least, the licenses seem to be preserved (but I'm not an expert).

And that's it! So, it's 99.99% static assets (well, there is a tzconfig executable). No packages, no package manager, not even a trace of libc!

Guess what? If I used the gcr.io/distroless/static as a base image (instead of scratch), it'd be a single-line fix for all of the above experiments 🔥 Even the one with the nonroot user because here is what the /etc/passwd file looks like in the distroless image:

root:x:0:0:root:/root:/sbin/nologin

nobody:x:65534:65534:nobody:/nonexistent:/sbin/nologin

nonroot:x:65532:65532:nonroot:/home/nonroot:/sbin/nologin

Not every program is statically linked

A nice by-product of experimenting with "from scratch" containers is that it allows you to learn what is actually needed for a program to run. For a statically linked executable, it seems to be just a bunch of config files and a proper rootfs directory structure. But what would it take for a dynamically linked one?

I'll try to compile this Go program with CGO enabled and then run it on a full-blown Ubuntu distro to see what dynamically-loaded libraries it needs:

package main

import (

"fmt"

"os/user"

)

func main() {

u, err := user.Current()

if err != nil {

panic(err)

}

fmt.Println("Hello from", u.Username)

}

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM golang:1 as builder

WORKDIR /app

COPY <<EOF main.go

package main

import (

"fmt"

"os/user"

)

func main() {

u, err := user.Current()

if err != nil {

panic(err)

}

fmt.Println("Hello from", u.Username)

}

EOF

RUN CGO_ENABLED=1 go build main.go

# -=== Target image ===-

FROM ubuntu

COPY --from=builder /app/main /

CMD ["/main"]

Build it with:

$ docker buildx build -t go-cgo-ubuntu .

The mighty ldd should do the trick:

$ docker run --rm go-cgo-ubuntu

Hello from root

$ docker run --rm go-cgo-ubuntu ldd /main

linux-vdso.so.1 (0x0000ffffbe929000)

libpthread.so.0 => /lib/aarch64-linux-gnu/libpthread.so.0 (0x0000ffffbe8d0000)

libc.so.6 => /lib/aarch64-linux-gnu/libc.so.6 (0x0000ffffbe720000)

/lib/ld-linux-aarch64.so.1 (0x0000ffffbe8f0000)

The output looks like a standard set of shared libraries needed for a dynamically linked Linux executable, including libc. But, of course, none of them can be found in the distroless/static image...

Meet the second distroless image - distroless/base

The distroless/static image sounds like a perfect choice for a base image if your program is a statically linked Go binary. But what if you absolutely have to use CGO and the libraries you depend on can't be statically linked (I'm looking at you, glibc)? Or you write things in Rust, or C, or any other compiled language with less perfect support of static builds than in Go?

Meet the distroless/base image!

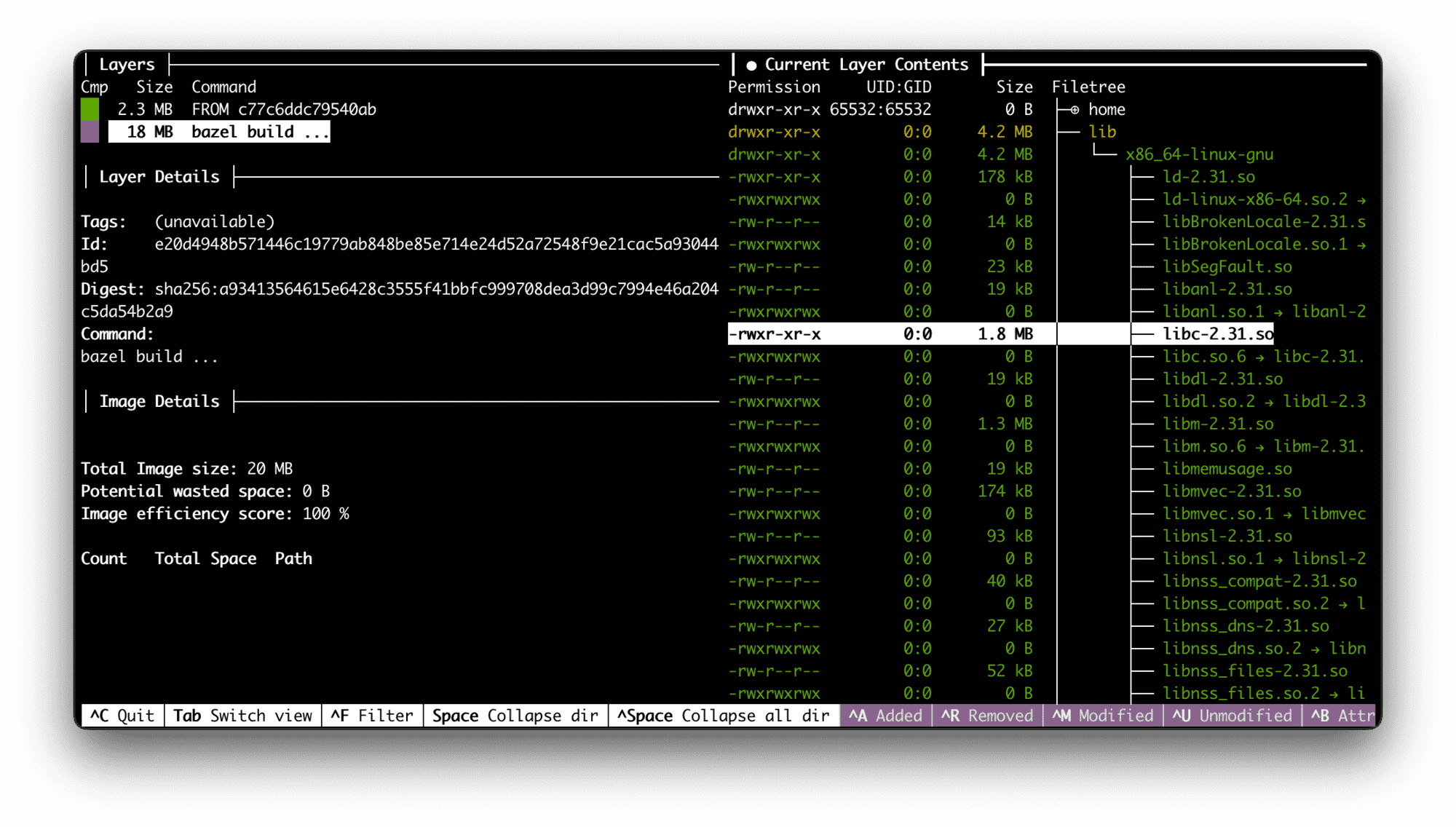

$ docker pull gcr.io/distroless/base

$ dive gcr.io/distroless/base

What the dive output tells us:

- It's 10 times bigger than distroless/static (but still just ~20MB).

- It has two layers (and the first layer IS distroless/static).

- The second layer brings tons of shared libraries - most notably libc and openssl.

- Again, no typical Linux distro fluff.

Here is how to adjust the target Go image to make it work with the new distroless base:

# -=== Target image ===-

FROM gcr.io/distroless/base

COPY --from=builder /app/main /

CMD ["/main"]

Not every dynamically linked use case is the same

I mentioned Rust in the previous section because it's pretty popular these days. Let's see if it can actually work with the distroless/base image. Here is a simple hello-world program:

fn main() {

println!("Hello world! (Rust edition)");

}

The complete scenario 👨‍🔬

Dockerfile:

# syntax=docker/dockerfile:1.4

# -=== Builder image ===-

FROM rust:1 as builder

WORKDIR /app

COPY <<EOF Cargo.toml

[package]

name = "hello-world"

version = "0.0.1"

EOF

COPY <<EOF src/main.rs

fn main() {

println!("Hello world! (Rust edition)");

}

EOF

RUN cargo install --path .

# -=== Target image ===-

FROM gcr.io/distroless/base

COPY --from=builder /usr/local/cargo/bin/hello-world /

CMD ["/hello-world"]

Build it with:

$ docker buildx build -t distroless-base-rust .

Let's try to run it:

$ docker run --rm distroless-base-rust

/hello-world: error while loading shared libraries:

libgcc_s.so.1: cannot open shared object file:

No such file or directory

Oh, shoot (again)! Apparently, the distroless/base image doesn't provide all the needed shared libraries! For some reason, Rust has a runtime dependency on libgcc, and it's not present in the container.

Meet the third distroless image - distroless/cc

Apparently, Rust is not so unique in its requirements. This dependency is so common that even a separate base image has been created - distroless/cc:

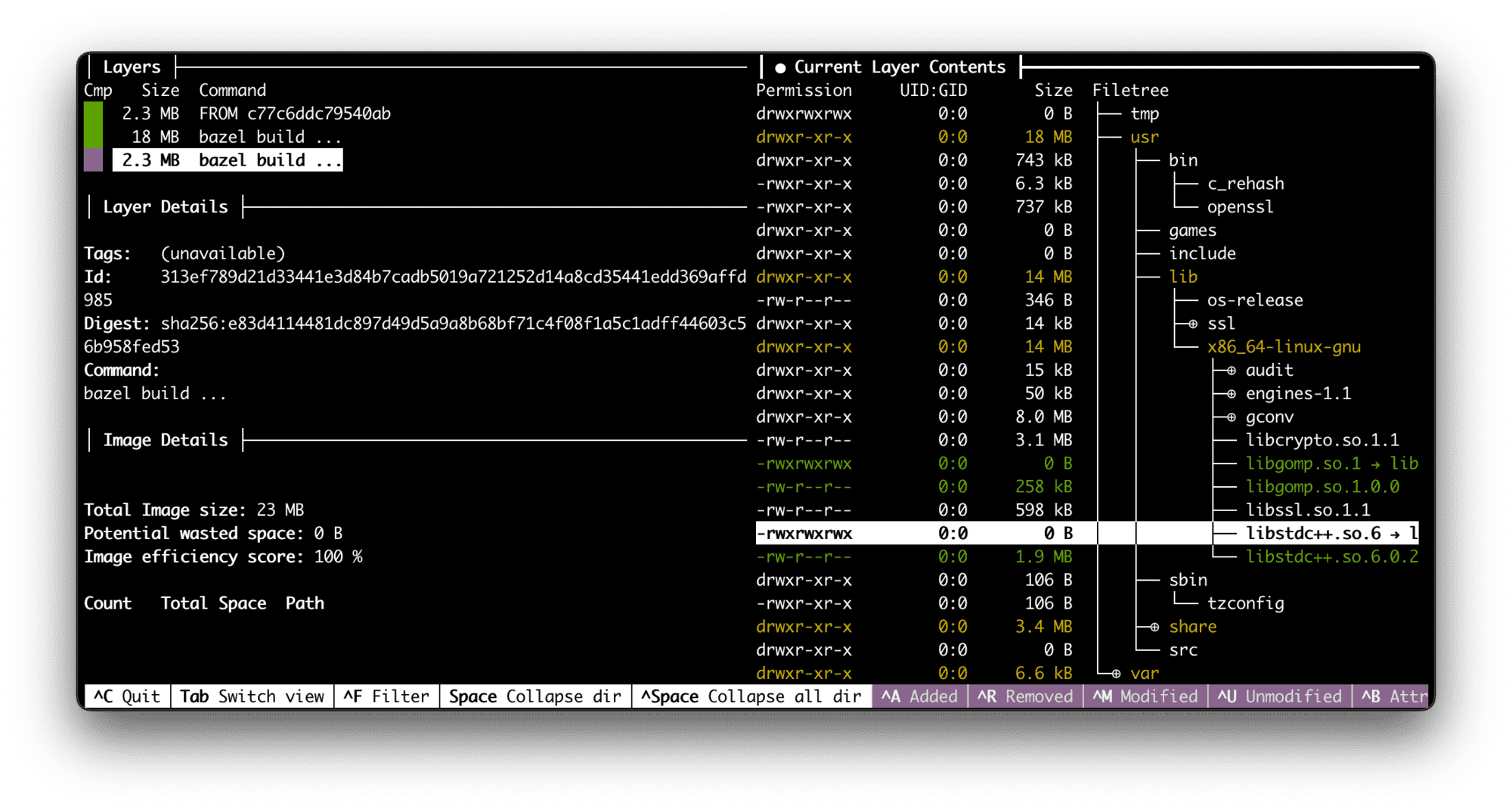

$ docker pull gcr.io/distroless/cc

$ dive gcr.io/distroless/cc

The dive output tells us that:

- It's a three-layered image (based on distroless/base).

- The new layer is just ~2MB big.

- The new layer contains libstdc++, a bunch of static assets, and even some Python scripts (but no Python itself)!

The fix for the Rust example:

# -=== Target image ===-

FROM gcr.io/distroless/cc

COPY --from=builder /usr/local/cargo/bin/hello-world /

CMD ["/hello-world"]

Base images for interpreted or VM-based languages

Some languages (like Python) require an interpreter for a script to run. Some others (like JavaScript or Java) require a full-blown runtime (like Node.js or JVM). Since the distroless images considered so far lack package managers, adding Python, OpenJDK, or Node.js to them might be problematic.

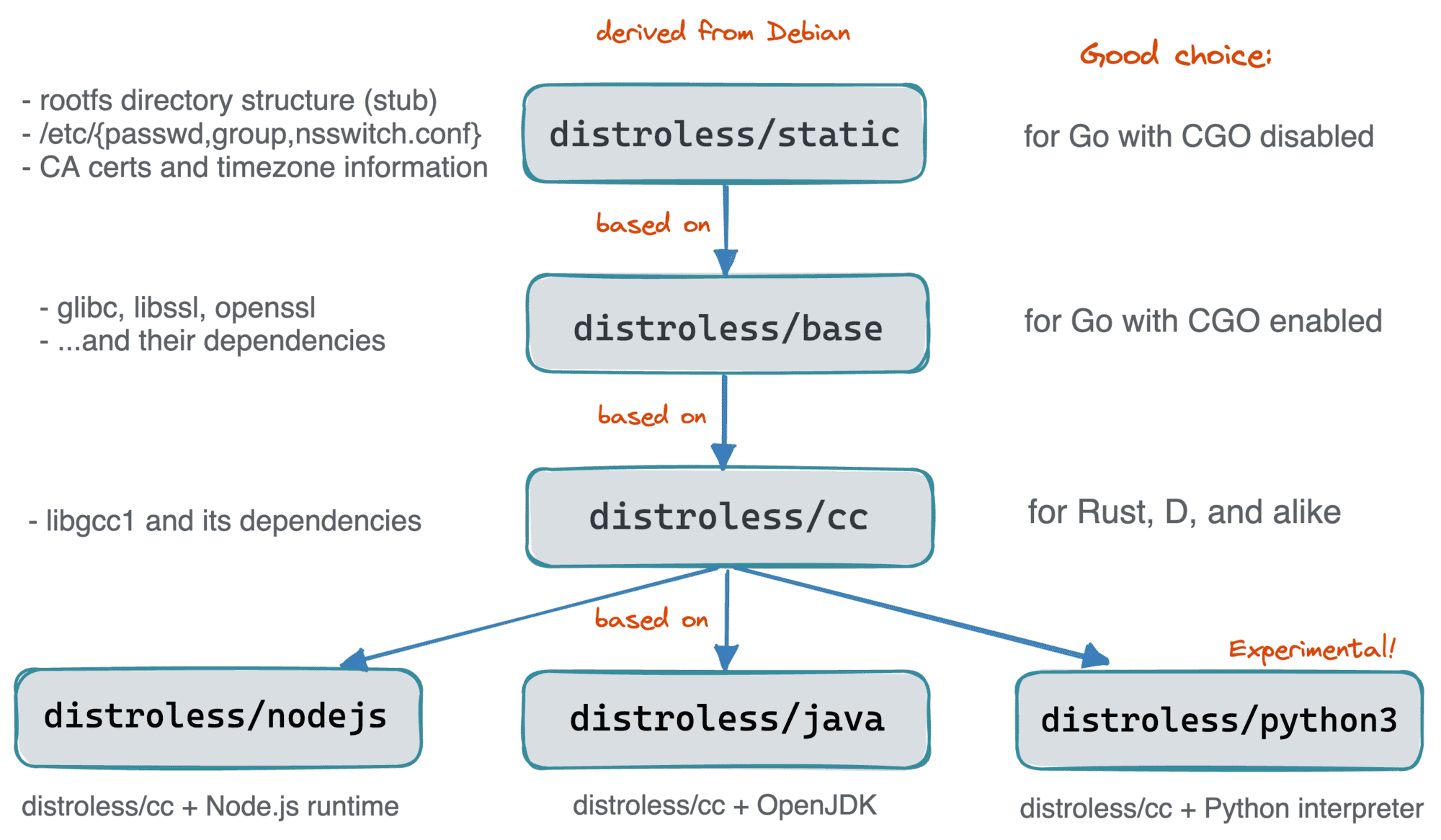

Luckily, the distroless project seems to support the most popular runtimes out of the box:

- Java 11 & 17

- Node.js 14 & 16 & 18

- Python 3 (experimental)

The above base images are built on top of the distroless/cc image, adding one or two extra layers with the corresponding runtime or interpreter.

Here is what the final image hierarchy looks like:

Who uses distroless base images

I use(d)! But only the distroless/static one. It's my favorite. On a more serious note though, I'm aware of the following prominent users: Kubernetes (motivation), Knative, and Kubebuilder.

The ko and Jib projects also use distroless base images, making most of their users indirect consumers of distroless.

And, of course, I'll gladly list more use cases here - so please do report!

Pros, cons, and alternatives of distroless images

The distroless images are small, fast, and, potentially, more secure. To me, it's the most important pro. Additionally, since their generation is deterministic, theoretically, it should be possible to encode SBOM(-like) information in every build simplifying life for the vulnerability scanners (but to the best of my knowledge, it's not done yet, and the scanners actually struggle to produce meaningful results for the distroless-based images).

At the same time, this particular implementation of distroless seems to be inflexible. Adding new stuff to a distroless base is tricky: changing the base itself requires knowing bazel (and becoming a fork maintainer?), and adding things later on is complicated by the lack of package managers. The choice of base images is limited by the project maintainers, so if you don't fit, you can't benefit from them.

The distroless base images (automatically) track the upstream Debian releases, so it makes CVE resolution in them as good as it is in the said distro (draw your own conclusion here) and in the corresponding language runtime.

So, my opinion is - the idea is brilliant and much needed, but the implementation might not be the best one.

If you're keen on the idea of carefully crafting your images from some minimal base, you may want to take a look at:

- Distroless 2.0 project - uses Alpine as a minimalistic & secure base image and, with the help of two tools, apko and melange, allows you to build an application-tailored image containing only (mostly?) the necessary bits.

- Chisel - a somewhat similar idea to the above project, but from Canonical, hence, Ubuntu-based. The project seems very new, but Microsoft has already used it in production.

- Multi-stage Docker builds - no kidding! You can still start "from scratch" and carefully copy over only the needed bits from the build stages to your target image.

- buildah - is a powerful tool to build container images that, in particular, allows you to build containers "from scratch", potentially leveraging the host system. Here is an example.

Still want to have minimalistic container images but don't have time for the above wizardry? Then I have a "wizard in the box" for you:

- DockerSlim - a CLI tool that allows you to automatically convert a "fat" container image into a "slim" one by doing a runtime analysis of the target container and throwing away the unneeded stuff.

You can read more about the struggle of producing decent container images in 👉 this article of mine.

Conclusion

Does a container have to have a full-blown Linux distro inside? Well, the short answer is "no". But in reality, it's more involved than just a simple "no" because, while technically functional, pure scratch containers often lack essential bits that we subconsciously expect to be always present (like CA certs or timezones). And the GoogleContainerTools' distroless is one of the projects that try to make scratch images usable for mere mortals.

Resources

- Minimal Container Images: Towards a More Secure Future

- Secure Your Software Factory with melange and apko - aka distroless 2.0

- .NET 6 is now in Ubuntu 22.04 - distroless 2.0 but with Ubuntu instead of Alpine base.

- Rebase Kubernetes Main Master and Node Images to Distroless/static
