I often find myself staring at Nginx or Envoy access logs flooding my screen with real-time data. At such moments, my only wish is to aggregate these lines somehow and analyze the output at a slower pace, ideally with some familiar and concise query language. To my surprise, I haven't met a tool satisfying all my requirements yet. Well, I should be honest here - I haven't done thorough research. But if there were a tool as widely known for logs as jq is for JSON, I probably wouldn't have missed it.

So, here we go - my attempt to write a full-fledged parsing and query engine and master Rust at the same time. Yes, I know, it's a bad idea. But who has time for good ones?

First things first - a usage preview:


The project is heavily influenced by jq and PromQL. Recently, I also learned about angle-grinder, so I had a chance to incorporate some ideas from that wonderful but lesser-known tool too.

I tend to see logs as time-series data, so I want to work with them the way I work with time series. In an ideal world, all logs would be aggregated, parsed into fields and metrics, stored in Elasticsearch and Prometheus, and queried from Kibana and Grafana. However, we don't live in such a world just yet. So, I want to be able to quickly parse semi-structured files locally. The parsing result should be a stream of timestamped and strongly typed records. Having such a stream, I'd be able to query it with a PromQL-like language.

While angle-grinder is quite powerful at parsing and manipulating data, I still find it hard to use for time series. In contrast, I tried to design pq time-series-first. It has rather basic parsing capabilities and almost no transformation features, but extensive query functionality is a must.

Here is what a typical pq command may look like:

    pq '/[^\[]+\[([^]]+)]\s+"([^\s]+)[^"]*?"\s+(\d+)\s+(\d+).*/
    | map { .0:ts, .1 as method, .2:str as status_code, .3 as content_len }
    | sum(sum_over_time(content_len[1s])) by (method) / 1024'

A program consists of a mandatory decoding step, an optional mapping step, and an optional query step, all separated by the | symbol. Below are some screencast demos, and don't forget to check out the README file for a more technical explanation.
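To make the first two steps more concrete, here is a rough Python sketch of what decoding and mapping conceptually do. It is not how pq is implemented: the function names, the sample log line, and the choice to treat `content_len` as an integer are my assumptions for illustration. The regex is the one from the command above, with the closing brackets escaped for Python's `re` module.

```python
import re
from datetime import datetime

# Decoding step: capture groups become positional fields .0, .1, .2, .3.
LINE_RE = re.compile(r'[^\[]+\[([^\]]+)\]\s+"([^\s]+)[^"]*?"\s+(\d+)\s+(\d+).*')


def decode(line):
    """Return the tuple of captured groups, or None if the line does not match."""
    m = LINE_RE.match(line)
    return m.groups() if m else None


def to_record(fields):
    """Mapping step: give the positional fields names and types."""
    ts, method, status_code, content_len = fields
    return {
        "ts": datetime.strptime(ts, "%d/%b/%Y:%H:%M:%S %z"),  # .0:ts
        "method": method,                  # .1 as method
        "status_code": status_code,        # .2:str as status_code (kept as text)
        "content_len": int(content_len),   # .3 as content_len (assumed numeric)
    }


# Made-up sample line in the usual Nginx access-log layout.
SAMPLE = '127.0.0.1 - - [10/Oct/2021:13:55:36 +0000] "GET /en/posts/foo/ HTTP/1.1" 200 2326'

record = to_record(decode(SAMPLE))
print(record["ts"].isoformat(), record["method"], record["status_code"], record["content_len"])
```

The result is the kind of timestamped, typed record that the query step can then treat as a time-series sample.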

Decoding:

Mapping:

Querying and formatting:

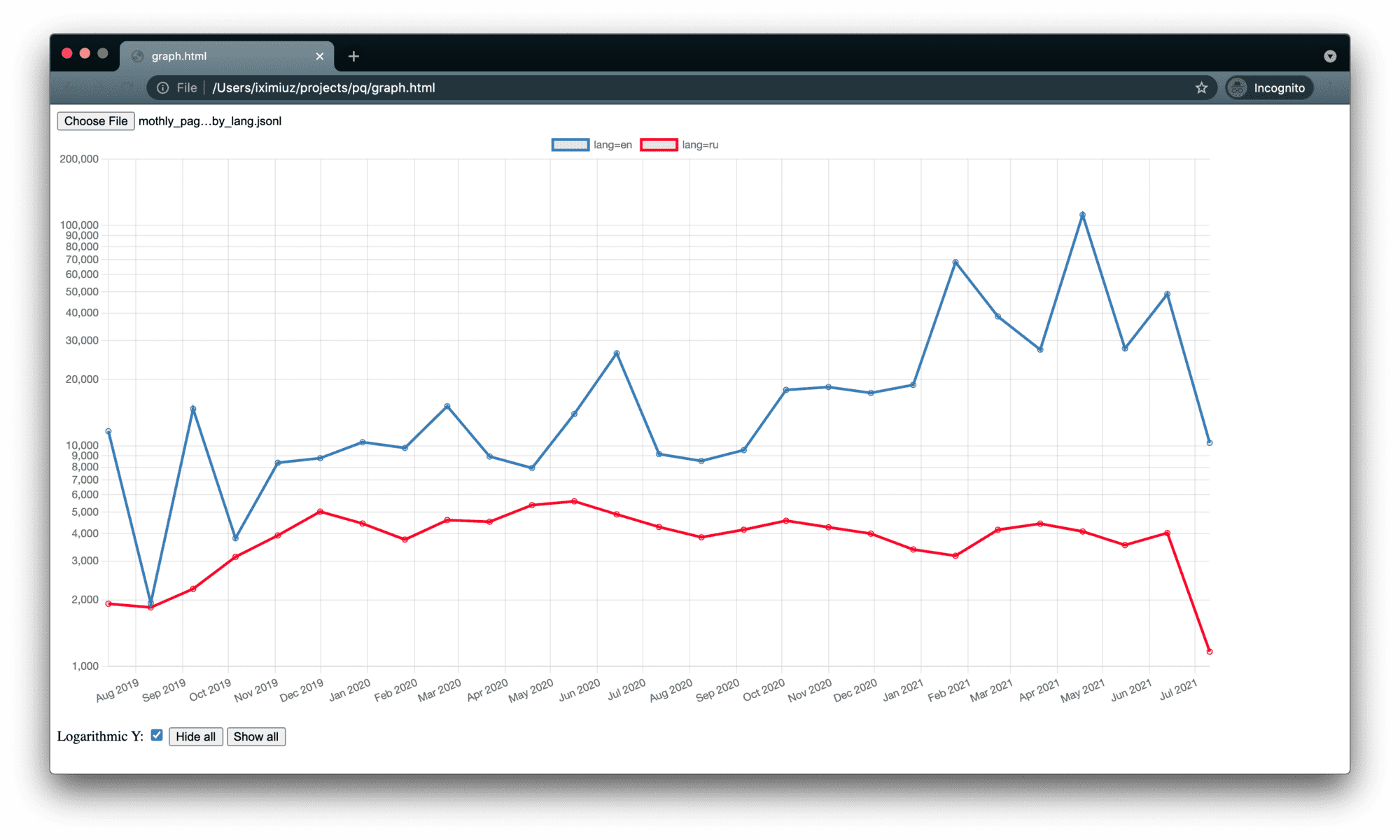

To eat my own dog food, I used pq to analyze the Nginx access.log file of this blog. I hacked a tiny HTML page to visualize the JSON produced by pq:

Monthly page views by post language:

    pq '
    /([^\s]+).*?\[([^\]]+).*?"([A-Z]+)\s+\/(en|ru)\/posts\/([a-z0-9-]+)\/.*?HTTP.*?(\d+)\s+(\d+)/
    | map {
        .0 as ip,
        .1:ts,
        .2 as method,
        .3 as lang,
        .4 as post,
        .5:str as status_code,
        .6 as content_len
      }
    | select sum(
        count_over_time(
          __line__{method="GET", status_code="200"}[4w]
        )
      ) by (lang)
    | to_json'

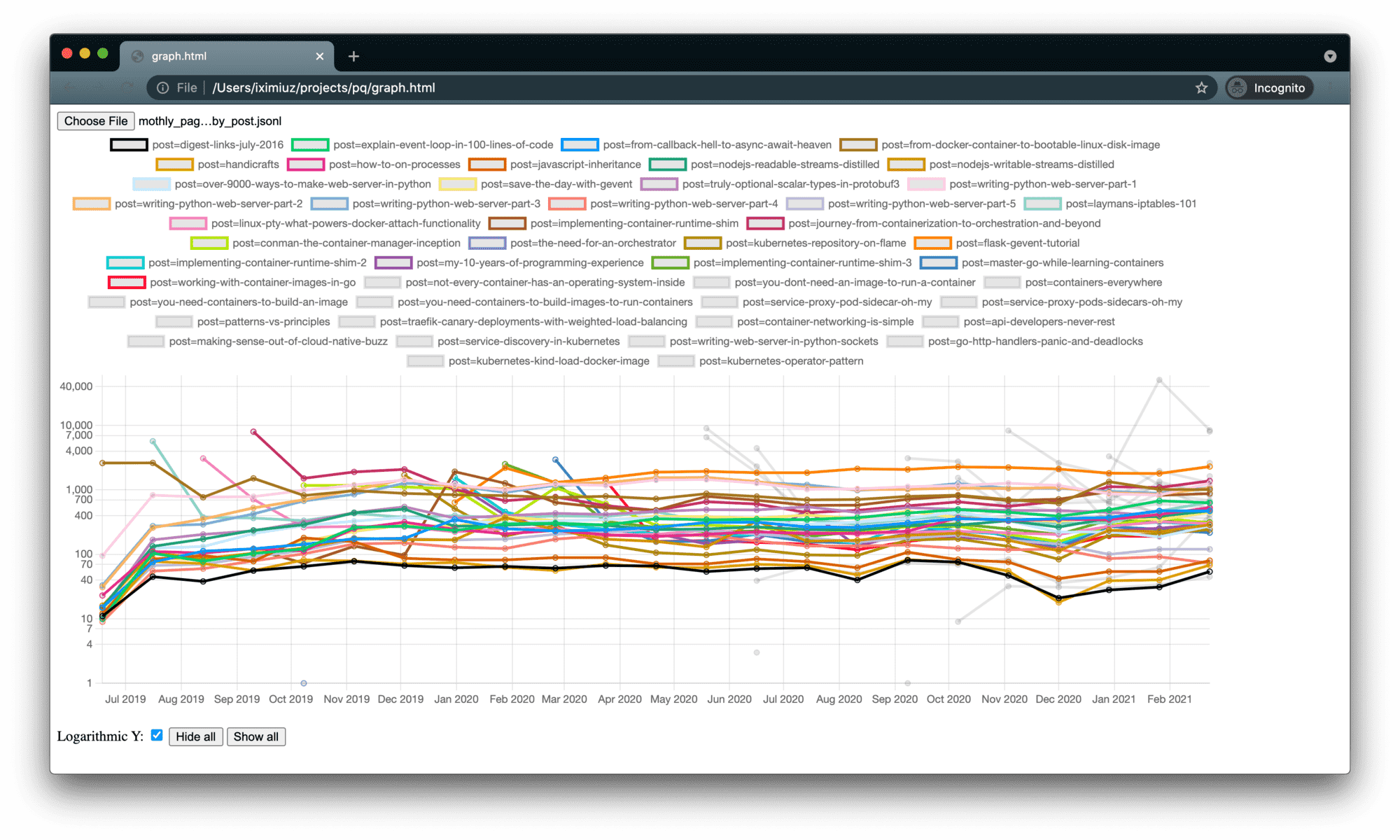
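If the PromQL-style query above looks opaque, here is a simplified Python sketch of what `sum(count_over_time(__line__{...}[4w])) by (lang)` computes at a single evaluation time. The helper name, the made-up records, and the window boundary handling (left-open, right-closed) are assumptions for illustration, not pq's actual behavior. Counting per label directly gives the same totals as counting per series and then summing by that label.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone

WINDOW = timedelta(weeks=4)  # the [4w] range


def page_views_by(records, label, eval_time):
    """Count matching records inside the window ending at eval_time, grouped by label."""
    counts = Counter()
    for r in records:
        # Label matcher: {method="GET", status_code="200"}
        if r["method"] != "GET" or r["status_code"] != "200":
            continue
        # Range selector: only records within the last 4 weeks
        if eval_time - WINDOW < r["ts"] <= eval_time:
            counts[r[label]] += 1
    return dict(counts)


# Made-up records, shaped like the output of the mapping step.
t0 = datetime(2021, 10, 1, tzinfo=timezone.utc)
records = [
    {"ts": t0 + timedelta(days=1), "method": "GET", "status_code": "200", "lang": "en"},
    {"ts": t0 + timedelta(days=2), "method": "GET", "status_code": "200", "lang": "ru"},
    {"ts": t0 + timedelta(days=3), "method": "GET", "status_code": "404", "lang": "en"},
    {"ts": t0 + timedelta(days=40), "method": "GET", "status_code": "200", "lang": "en"},
]

result = page_views_by(records, "lang", eval_time=t0 + timedelta(weeks=4))
print(result)
```

Here the 404 response and the record from day 40 are both left out: the first fails the label matcher, the second falls outside the four-week window.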

Monthly page views by post:

    pq '
    /([^\s]+).*?\[([^\]]+).*?"([A-Z]+)\s+\/(en|ru)\/posts\/([a-z0-9-]+)\/.*?HTTP.*?(\d+)\s+(\d+)/
    | map {
        .0 as ip,
        .1:ts,
        .2 as method,
        .3 as lang,
        .4 as post,
        .5:str as status_code,
        .6 as content_len
      }
    | select sum(
        count_over_time(
          __line__{method="GET", status_code="200"}[4w]
        )
      ) by (post)
    | to_json'

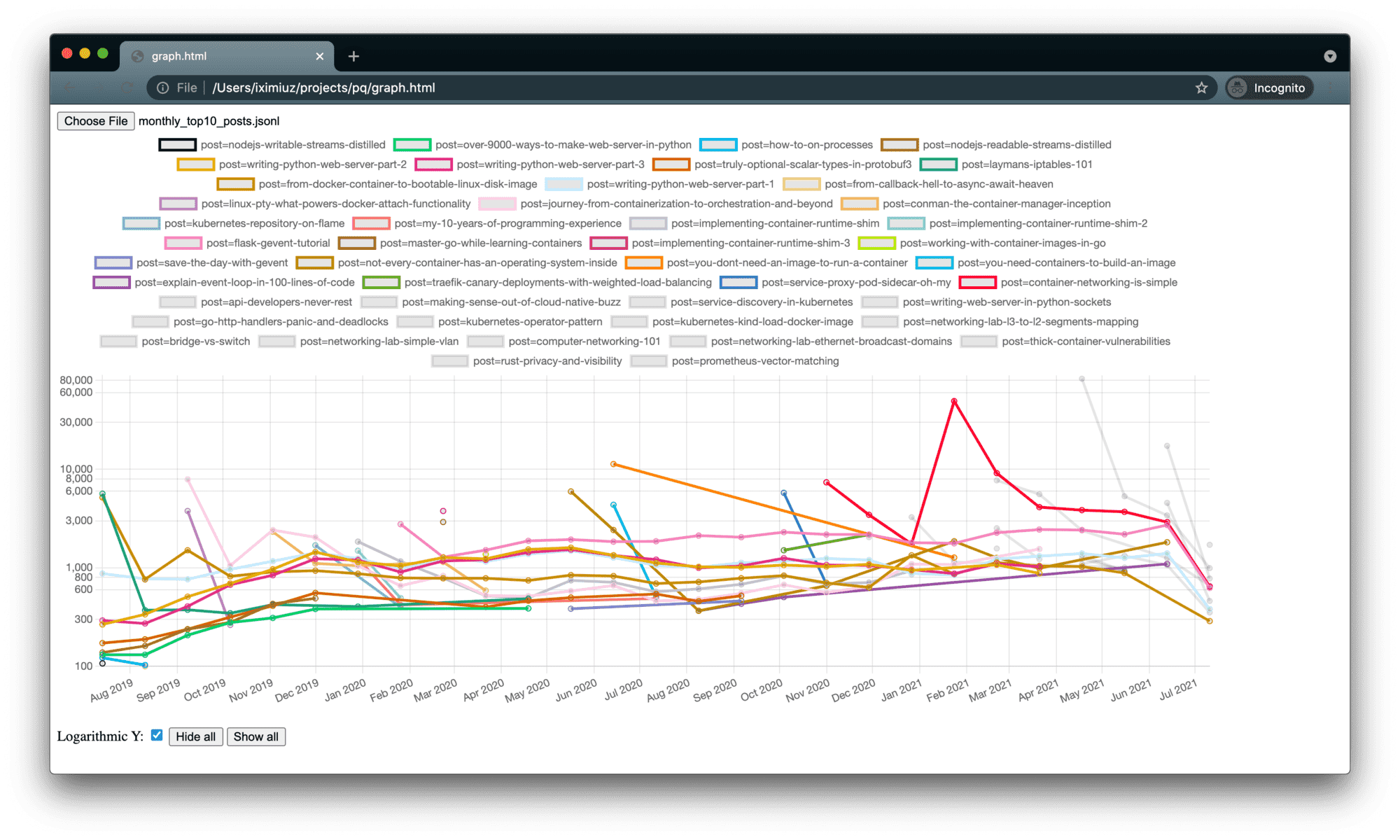

The above graph looks a bit overwhelming, so here are the top 10 posts by monthly page views:

    pq '
    /([^\s]+).*?\[([^\]]+).*?"([A-Z]+)\s+\/(en|ru)\/posts\/([a-z0-9-]+)\/.*?HTTP.*?(\d+)\s+(\d+)/
    | map {
        .0 as ip,
        .1:ts,
        .2 as method,
        .3 as lang,
        .4 as post,
        .5:str as status_code,
        .6 as content_len
      }
    | select topk(
        10,
        sum(
          count_over_time(
            __line__{method="GET", status_code="200"}[4w]
          )
        ) by (post)
      )
    | to_json'
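The `topk(10, ...)` wrapper keeps only the series with the largest values at each evaluation time. A minimal Python sketch of that selection, with a hypothetical helper and made-up view counts:

```python
import heapq


def topk(k, series):
    """Keep the k entries with the largest values from a {label: value} mapping."""
    return dict(heapq.nlargest(k, series.items(), key=lambda item: item[1]))


# Made-up per-post view counts for one evaluation time.
views = {"post-a": 120, "post-b": 45, "post-c": 300, "post-d": 7}

top2 = topk(2, views)
print(top2)
```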

While working on pq, I often needed to dig into the Prometheus code to better understand some of the query logic. So, if you deal with PromQL, you may find the notes I wrote up along the way useful:

- Prometheus Cheat Sheet - Metrics, Labels, Scrapes, Instant Vector, etc

- Prometheus Cheat Sheet - Moving Average, Max, Min, etc (Aggregation Over Time)

- Prometheus Cheat Sheet - How to Join Multiple Metrics (Vector Matching)

Fun fact

I've started writing this tool several times already. The first attempt was sometime in 2014, but I didn't manage to produce anything meaningful then. In 2016, I started the echelon0 project, and it happily coincided with the wonderful time of becoming the father of a little girl. Obviously, I didn't have any spare time for this side project. In 2019, I finally got a chance to work on it again, this time under the codename amnis. However, I overcomplicated it right from the beginning. The design was so powerful and all-encompassing that I never got to the code. And finally, pq turned into something more or less functional.

Cheers!
