I strive to produce concise but readable code. One of my favorite tactics - minimizing the number of local variables - can usually be achieved by minting or discovering higher-level abstractions and joining them together in a more or less declarative way.

Thus, when writing Go code, I often utilize the io.Reader and io.Writer interfaces and the related io machinery. A function like io.Copy(w io.Writer, r io.Reader) is a perfect example of such a higher-level abstraction - it is succinct to write and efficient at runtime, and it moves bytes from the origin r to the destination w.

But conciseness often comes at a price, especially in the case of I/O handling - getting a sneak peek at the data that's being passed around becomes much trickier in the code that relies on the Reader and Writer abstractions.

So, is there an easy way to see the data that comes out of readers or goes into writers without a significant code restructure or an introduction of temporary variables?


Pipelines and T-splitters

When I stumbled upon this problem in Go for the first time, it immediately reminded me of another similar problem I'd come across years ago. As a Linux person, I often construct multi-command pipelines to process data right in the terminal:

    ... | grep | sed | sort | uniq | ...

    >----->------>----->------>------>----->
    [stdin]                         [stdout]

In the above pipeline, the stdout stream of every command but the last is piped into the stdin stream of the following command, and the output of the very last command is dumped onto the screen. In other words, the data flow in the pipeline is linear.

Continuing the plumbing analogy, if I needed to take a look at the data from an intermediate step of the pipeline while keeping the full pipeline running, I'd need to attach some sort of T-splitter. And, as usually happens, there is already a shell command for that, called tee!

    ... | grep | sed | tee tmp.log | sort | uniq | ...

    >----->------>----->------┬------>------>------>----->
    [stdin]                   |                   [stdout]
                              |
                              |
                              v
                          [tmp.log]

Much like a real T-splitter, the tee command can be injected at any position in a pipeline: it connects the stdout of the preceding command to the stdin of the succeeding command and copies the data passing through to a file.

Since using T-splitters is a common pattern when dealing with I/O streams, it'd be reasonable to expect the Go standard library to provide similar capabilities. And indeed, a quick search for the word tee on the io package docs page turned up a few matches! It turns out that Go's io package comes with tee-like functionality included. However, it's a bit more fine-grained than its shell ancestor.

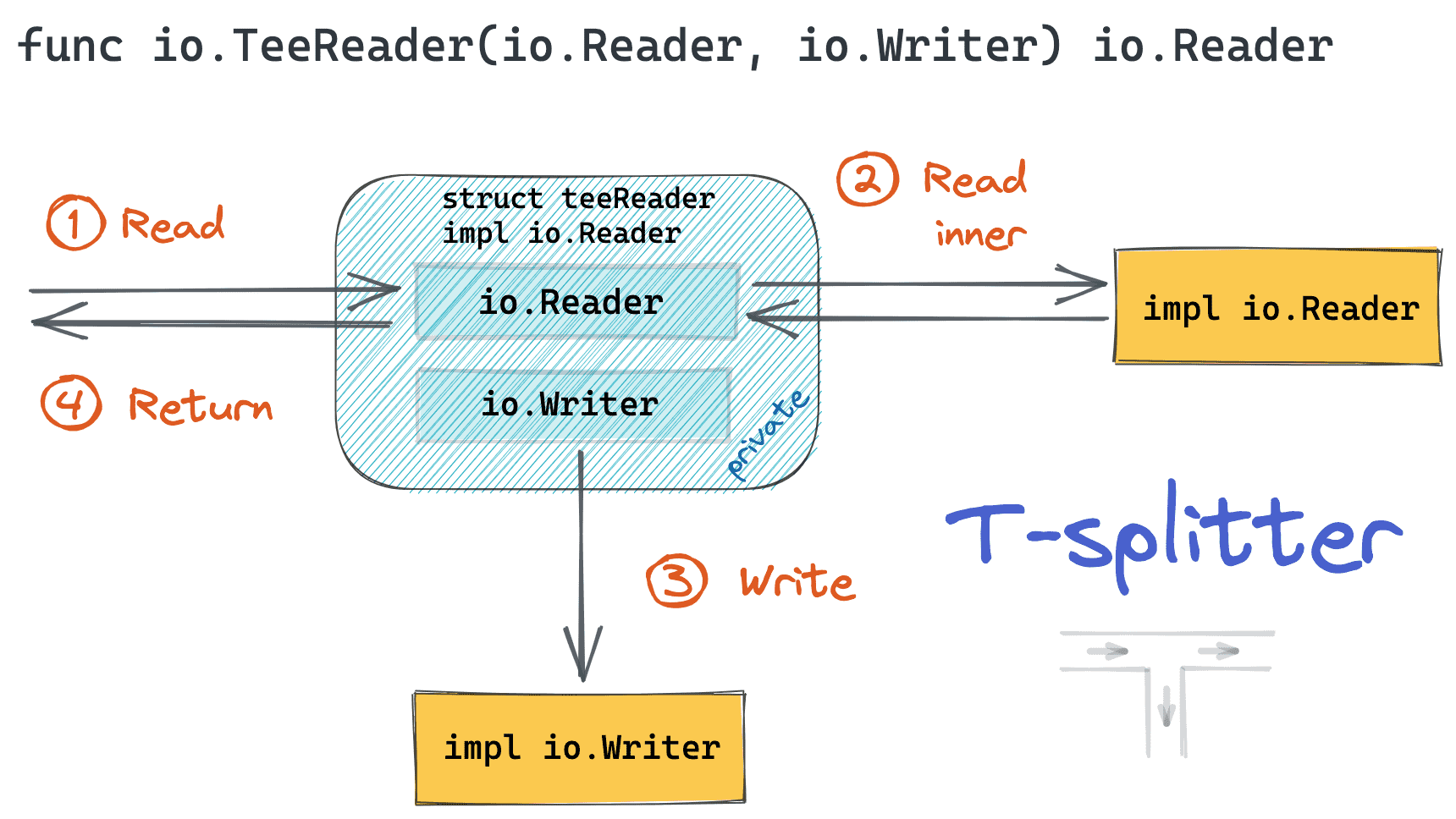

io.TeeReader

In Go, attaching a T-splitter to an io.Reader is different from attaching a T-splitter to an io.Writer. Let's see how it can be done in both cases.

Starting with the io.Reader case: there is a utility function, io.TeeReader, that wraps an existing reader in a thin auxiliary structure that copies everything read from the original reader to a specified writer:

    // TeeReader returns a Reader that writes to w what it reads from r.
    // ...
    func TeeReader(r Reader, w Writer) Reader

The code of TeeReader is actually pretty simple. Taken from src/io/io.go:

    type teeReader struct {
        r Reader
        w Writer
    }

    func TeeReader(r Reader, w Writer) Reader {
        return &teeReader{r, w}
    }

    func (t *teeReader) Read(p []byte) (n int, err error) {
        n, err = t.r.Read(p)
        if n > 0 {
            if n, err := t.w.Write(p[:n]); err != nil {
                return n, err
            }
        }
        return
    }
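
A minimal usage sketch may help here. It relies on one detail visible in the Read method above: the side writer only receives bytes that the consumer has actually read. The helper names tapPrefix and tapAll are hypothetical, and io.ReadAll assumes Go 1.16 or newer.

```go
package main

import (
	"bytes"
	"fmt"
	"io"
	"strings"
)

// tapPrefix (hypothetical helper) reads only the first n bytes of s
// through a TeeReader and returns what the side writer has seen so far.
func tapPrefix(s string, n int) (string, error) {
	var tap bytes.Buffer
	tee := io.TeeReader(strings.NewReader(s), &tap)
	if _, err := io.ReadFull(tee, make([]byte, n)); err != nil {
		return "", err
	}
	return tap.String(), nil
}

// tapAll (hypothetical helper) drains s through a TeeReader and returns
// both what the consumer read and what the side writer received.
func tapAll(s string) (string, string, error) {
	var tap bytes.Buffer
	data, err := io.ReadAll(io.TeeReader(strings.NewReader(s), &tap))
	return string(data), tap.String(), err
}

func main() {
	prefix, err := tapPrefix("some payload", 4)
	fmt.Printf("tap after a 4-byte read: %q (err=%v)\n", prefix, err)

	consumed, tapped, err := tapAll("some payload")
	fmt.Printf("consumed=%q tapped=%q (err=%v)\n", consumed, tapped, err)
}
```

In other words, the tap is driven by the consumer: if the consumer stops reading early, the copy stops too.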

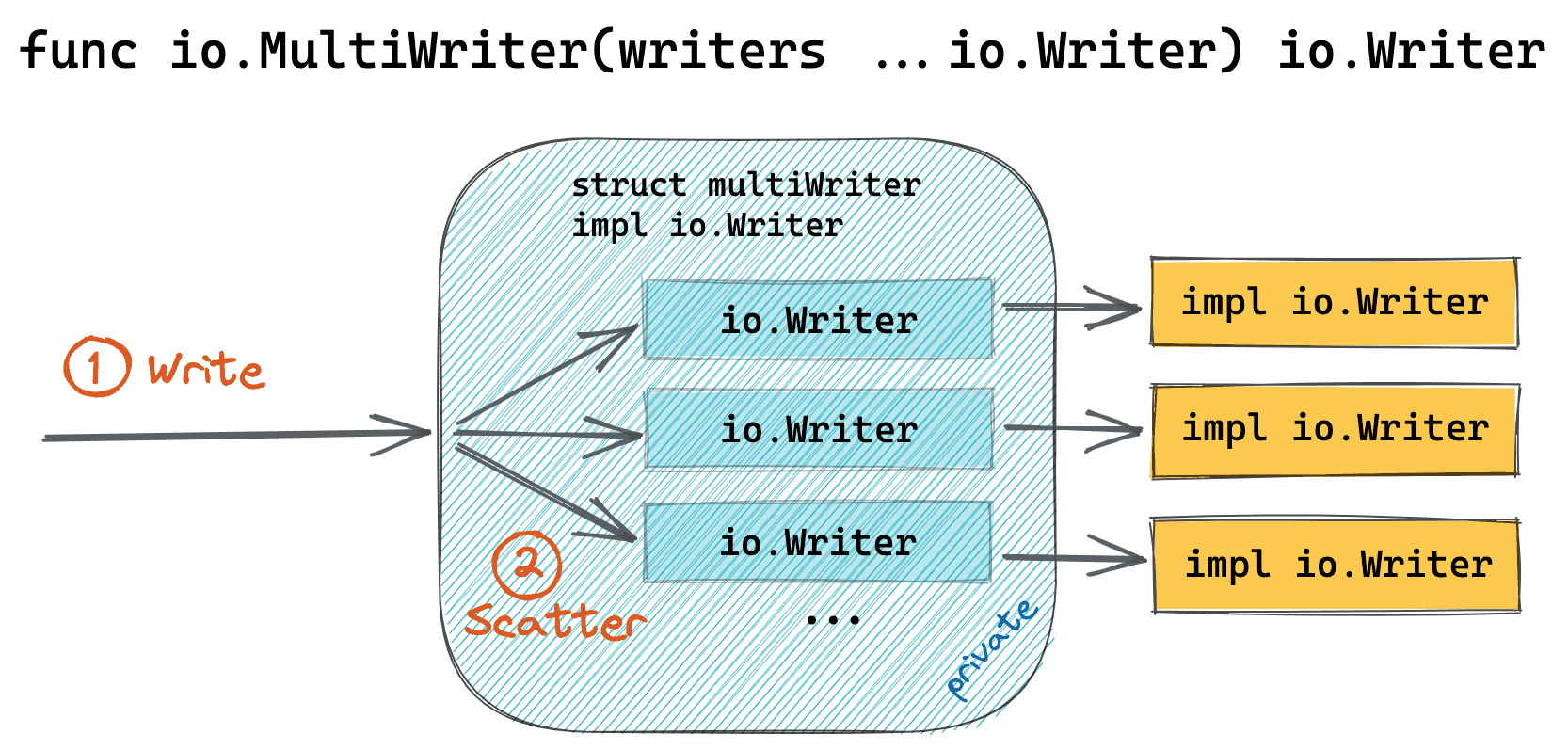

io.MultiWriter

The writer's case is not really T-shaped, but it still resembles some plumbing work. An arbitrary number of io.Writers can be joined into a single io.Writer instance using the io.MultiWriter utility function.

Notice that the Go documentation for the MultiWriter does mention the Unix tee utility:

    // MultiWriter creates a writer that duplicates its writes to all the
    // provided writers, similar to the Unix tee(1) command.
    // ...
    func MultiWriter(writers ...Writer) Writer

The code of MultiWriter is a bit more verbose but still a piece of cake. Taken from src/io/multi.go:

    type multiWriter struct {
        writers []Writer
    }

    func MultiWriter(writers ...Writer) Writer {
        allWriters := make([]Writer, 0, len(writers))
        for _, w := range writers {
            if mw, ok := w.(*multiWriter); ok {
                allWriters = append(allWriters, mw.writers...)
            } else {
                allWriters = append(allWriters, w)
            }
        }
        return &multiWriter{allWriters}
    }

    func (t *multiWriter) Write(p []byte) (n int, err error) {
        for _, w := range t.writers {
            n, err = w.Write(p)
            if err != nil {
                return
            }
            if n != len(p) {
                err = ErrShortWrite
                return
            }
        }
        return len(p), nil
    }

io.TeeReader and io.MultiWriter example

Here is an example of a real-world program I was debugging a couple of days ago using the tee-trick. Of course, I stripped it to the bones to keep the focus on the relevant parts:

    package main

    import (
        "encoding/json"
        "net/http"
    )

    func main() {
        http.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {
            // The request structure is non-trivial.
            // E.g., has many optional and nested fields.
            request := make(map[string]interface{})
            json.NewDecoder(r.Body).Decode(&request)

            // The actual response creation logic is
            // hidden behind many layers of abstraction.
            response := map[string]string{
                "message": "Looks very good!",
            }
            json.NewEncoder(w).Encode(response)
        })

        http.ListenAndServe(":8888", nil)
    }

My goal was to get the actual request and response bodies dumped to the stdout without any significant code reshaping. So, after applying the tee-trick, the code started looking as follows:

    package main

    import (
        "encoding/json"
        "io"
        "net/http"
        "os"
    )

    func main() {
        http.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {
            request := make(map[string]interface{})

            // 👉 io.TeeReader
            json.NewDecoder(io.TeeReader(r.Body, os.Stdout)).
                Decode(&request)

            response := map[string]string{
                "message": "Looks very good!",
            }

            // 👉 io.MultiWriter
            json.NewEncoder(io.MultiWriter(os.Stdout, w)).
                Encode(response)
        })

        http.ListenAndServe(":8888", nil)
    }

Essentially, each change was a one-liner (plus the two extra imports). Test it out with:

    $ go run server.go &
    $ curl -X POST -d '{"foo": "bar"}' localhost:8888

Instead of a conclusion

One of the things I enjoyed the most while playing with this code was the elegance of the Go standard library. Adding tee-like functionality didn't bloat the io package with unnecessary type definitions - the teeReader and multiWriter are just hidden private structs, while the public API surface was merely extended with two plain functions that operate in terms of the basic io.Reader and io.Writer interfaces. Full reuse of the existing concepts!

However, the main takeaway here is probably unrelated to the blog post's subject - often, the ability to notice a conceptual pattern compensates for a lack of knowledge of the standard library of the language at hand.
